Enterprise AI Platform with Agentic RAG and AI Agents: Deployment Scenarios

Adopting AI at the enterprise level is fundamentally different from using public AI services. What works for experimentation or individual productivity does not translate into environments where data is sensitive, processes are complex, and accountability is critical. Enterprises require robust architecture, strict governance, and full control over how AI operates, accesses data, and executes actions. This is why AI cannot be treated as a simple API layer. It must be designed as a scalable, secure, and flexible system that integrates deeply with enterprise data, workflows, and permissions.

As organizations move from experimentation to real-world AI adoption, one question becomes critical:

“Where and how should AI run?”

For enterprise environments, especially those handling sensitive, regulated, or high-value data, the answer is rarely “just use the cloud.” It requires a deliberate balance between control, performance, compliance, and innovation.

This is where elDoc stands apart. Built as an Enterprise AI Platform with Agentic RAG and AI Agents, elDoc is designed with architecture at its core, not as an afterthought. It supports multiple deployment scenarios, from fully on-premise to hybrid and cloud-extended models, enabling organizations to align AI infrastructure with their exact security, regulatory, and operational requirements.

The result is not just AI adoption but enterprise-grade AI execution, where flexibility and scalability are built into the foundation.

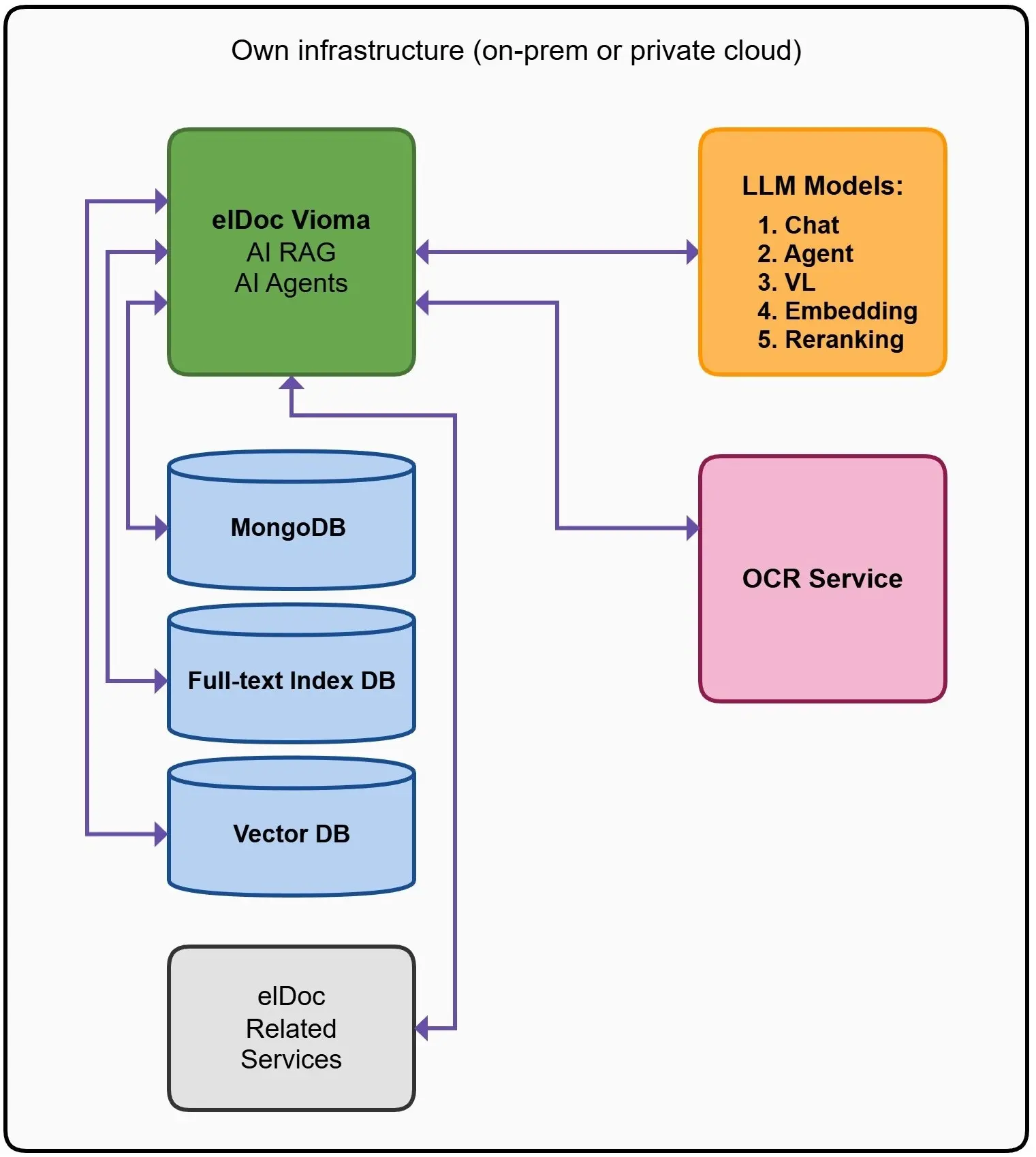

elDoc – Fully On-Premise / Private Cloud Deployment

Maximum Control. Maximum Security.

In this deployment model, the entire AI ecosystem operates within your own infrastructure, either on-premise or in a fully controlled private cloud.

Architecture Overview

- elDoc – the core platform orchestrating Agentic RAG and AI Agents

- MongoDB – stores structured data, document metadata, workflow states, and extracted information

- Full-Text Index Database – enables high-speed keyword-based search across large document collections

- Vector Database – powers semantic search using embeddings, allowing AI to understand meaning, not just words

- OCR Services – process scanned and image-based documents into machine-readable text

- LLM Models – deployed locally or accessed via private, secured endpoints

What these components actually do

- MongoDB acts as the operational backbone, managing all structured information – documents, extracted fields, audit logs, and process states. It ensures consistency and traceability across workflows.

- Full-Text Search allows precise retrieval based on exact terms, phrases, and document structure – critical for compliance use cases where exact wording matters (e.g., contracts, regulations).

- Vector Database enables semantic understanding. Instead of searching for exact matches, it retrieves content based on meaning – this is what powers RAG (Retrieval-Augmented Generation) and allows AI to reason across documents.

👉 Together, these layers create a hybrid retrieval system combining precision (keyword search) and intelligence (semantic search).
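One widely used way to combine keyword and semantic results is reciprocal rank fusion (RRF), which merges two ranked lists into one. The sketch below is illustrative only: the two input lists stand in for hits from the full-text index and the vector database, the function name and the constant `k=60` are assumptions, and the article does not say which fusion method elDoc actually uses.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of document IDs into one list.

    Each document scores sum(1 / (k + rank)) over every list it appears in,
    so items ranked well by both keyword and semantic search rise to the top.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical exact-term hits from a full-text index ...
keyword_hits = ["contract-17", "contract-03", "policy-22"]
# ... and hypothetical meaning-based hits from a vector database.
semantic_hits = ["contract-03", "memo-41", "contract-17"]

print(reciprocal_rank_fusion([keyword_hits, semantic_hits]))
# ['contract-03', 'contract-17', 'memo-41', 'policy-22']
```

Documents found by both retrievers (`contract-03`, `contract-17`) outrank those found by only one, which is the "precision plus intelligence" effect described above.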

What this means

All data processing, AI reasoning, and agent execution remain fully contained within your environment.

- No data leaves your infrastructure

- No exposure to public AI services

- Full control over every interaction and decision

This is not just a deployment choice – it is a security architecture.

LLM Flexibility: No Vendor Lock-In

A key advantage of elDoc in this setup is complete flexibility in model selection:

- Use open-source models hosted internally

- Deploy fine-tuned private models

- Integrate enterprise-approved proprietary models

- Or implement a multi-model strategy depending on use case

👉 You are not tied to any single provider.

This means:

- Freedom to optimize for performance, cost, or accuracy

- Ability to switch models without changing architecture

- Future-proof AI strategy as models evolve

In other words:

Bring your own model or change it anytime.
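The idea of switching models without changing architecture can be sketched as application code that depends on one small interface, with each use case mapped to a model in a registry. All class names here (`ChatModel`, `ModelRegistry`, and the two backends) are hypothetical and the backends are stubs; this is an illustration of the pattern, not elDoc's API.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatModel(Protocol):
    """Any backend that turns a prompt into text: local, private, or hosted."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class LocalOpenSourceModel:
    # Hypothetical stand-in for an internally hosted open-source model.
    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] answer to: {prompt}"


@dataclass
class FineTunedPrivateModel:
    # Hypothetical stand-in for a fine-tuned model behind a private endpoint.
    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] domain answer to: {prompt}"


class ModelRegistry:
    """Maps use cases to models so a model can be swapped by configuration alone."""

    def __init__(self) -> None:
        self._models: dict[str, ChatModel] = {}

    def register(self, use_case: str, model: ChatModel) -> None:
        self._models[use_case] = model

    def ask(self, use_case: str, prompt: str) -> str:
        return self._models[use_case].complete(prompt)


registry = ModelRegistry()
registry.register("summarize", LocalOpenSourceModel("local-llm"))
registry.register("contracts", FineTunedPrivateModel("legal-ft"))
print(registry.ask("contracts", "List the termination clauses"))

# Switching models touches only the registration, not the calling code.
registry.register("contracts", LocalOpenSourceModel("local-llm"))
print(registry.ask("contracts", "List the termination clauses"))
```

A multi-model strategy is then just a matter of registering different backends for different use cases.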

Industries Where Maximum Control Is Essential

This deployment model is particularly critical for industries where data sensitivity, compliance, and control are mandatory:

Finance

- Banking, insurance, accounting

- Regulatory compliance

- Protection of financial transactions and records

Government & Public Sector

- Citizen data and national systems

- Strict data sovereignty requirements

- Jurisdictional and legal control over information

Defense & Security

- Classified and mission-critical data

- Air-gapped and isolated environments

- Zero external dependency policies

Healthcare & Life Sciences

- Patient data and medical records

- Compliance with GDPR, HIPAA, and similar frameworks

- High sensitivity of personal and clinical data

Manufacturing & Critical Infrastructure

- Intellectual property (designs, specifications)

- Supply chain and operational data

- Risk of industrial espionage

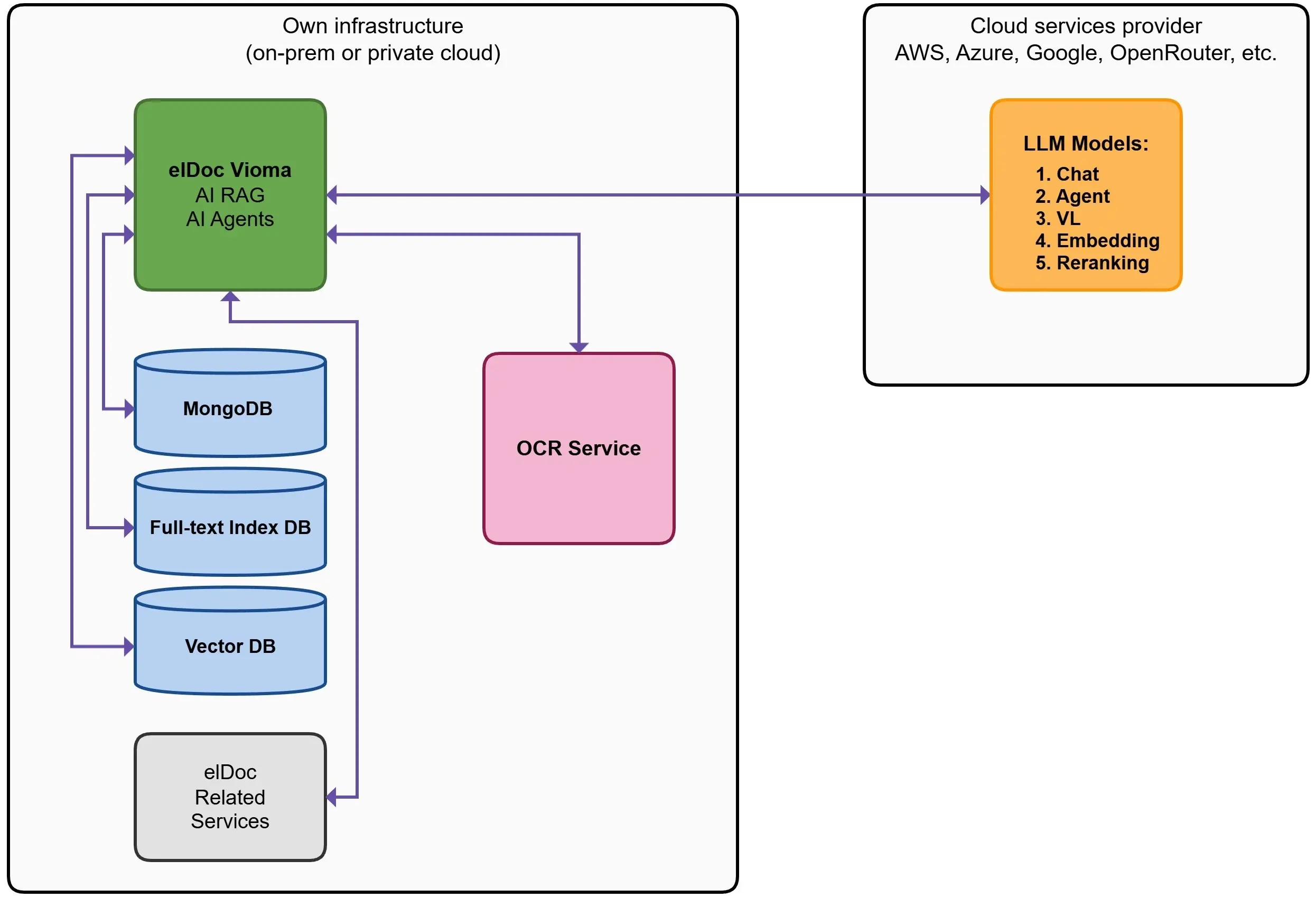

elDoc – Hybrid Deployment (On-Prem Core + Cloud AI Models)

Control Meets Flexibility

This is the most common and practical enterprise deployment scenario, combining on-premise control with cloud-based AI capabilities.

Architecture

- elDoc core platform runs on-premise or in a private cloud

- All databases remain internal (MongoDB, Full-text Index, Vector DB)

- External providers are used for:

  - LLM models (Chat, Agents, Embeddings, Reranking)

  - Optional AI services

What this means

Sensitive data, documents, and metadata remain fully controlled within your environment, while AI intelligence is extended through secure access to external LLM providers. In practice, only the minimum required context is shared with models – never the full dataset.

Choose Your Preferred LLM Providers as a Service

One of the key advantages of this architecture is the ability to use LLM providers as a service, selecting the models that best fit your business needs. Instead of committing to a single vendor or building internal AI infrastructure, organizations can gain instant access to models offered through AWS (Bedrock), Azure, or Google.

Why this matters

- Cost Efficiency: No need for significant upfront investment in GPU infrastructure, model hosting, or maintenance. You pay only for what you use.

- Reduced Operational Complexity: Infrastructure, scaling, and model updates are handled by the provider, allowing your teams to focus on business value, not AI operations.

- Access to Best-in-Class Models: Instantly leverage the latest advancements from leading AI providers without rebuilding your architecture.

- Flexibility Without Lock-In: Easily switch or combine providers based on performance, pricing, or specific use cases.

“In simple terms: you avoid heavy upfront investments while gaining immediate access to powerful, enterprise-grade AI capabilities.”

Critical point: Security

Even in this hybrid setup, enterprise security remains fully enforced:

- All requests are protected via RBAC, MFA, and access policies

- Sensitive fields can be excluded entirely

- Full audit logs, monitoring, and traceability are maintained

👉 AI operates within your governance model, not outside of it.
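The controls listed above can be combined into a single gate that every request passes through before it leaves the environment: a role check, exclusion of sensitive fields so only the minimum context is shared, and an audit entry. This is a minimal sketch under assumptions: the field names, roles, and helper functions are hypothetical, MFA and network-level controls are out of its scope, and the article does not describe how elDoc implements these checks.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: fields that must never leave the internal environment.
SENSITIVE_FIELDS = {"iban", "tax_id", "patient_name"}

# Hypothetical role-based permissions for calling an external LLM.
ROLE_PERMISSIONS = {"analyst": {"ask_llm"}, "viewer": set()}


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, user: str, action: str, detail: dict) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "detail": detail,
        })


def build_external_request(user, role, document, question, audit):
    """Return the payload for an external LLM, or raise if the role is not allowed."""
    if "ask_llm" not in ROLE_PERMISSIONS.get(role, set()):
        audit.record(user, "ask_llm_denied", {"role": role})
        raise PermissionError(f"role {role!r} may not call external models")

    # Share only non-sensitive fields: the minimum context, never the full record.
    context = {k: v for k, v in document.items() if k not in SENSITIVE_FIELDS}
    excluded = sorted(set(document) & SENSITIVE_FIELDS)
    audit.record(user, "ask_llm", {"excluded_fields": excluded})
    return json.dumps({"question": question, "context": context})


audit = AuditLog()
invoice = {"invoice_no": "INV-001", "total": 1200.0, "iban": "DE00 0000 0000"}
payload = build_external_request("alice", "analyst", invoice, "Is this overdue?", audit)
print(payload)
print(audit.entries[-1]["detail"])  # {'excluded_fields': ['iban']}
```

Because denied requests are logged as well as allowed ones, the audit trail shows both what was sent and what was blocked.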

When to choose this model

This deployment is ideal when:

- You want enterprise AI quickly without heavy infrastructure investment

- Your data must remain internally controlled

- You want access to best-in-class LLMs (via AWS Bedrock or similar)

- You need scalability without operational complexity

elDoc – The Core Layer Across All Scenarios: Intelligence That Stays Consistent Wherever You Deploy

Regardless of whether elDoc is deployed fully on-premise, hybrid, or cloud-extended, the core intelligence layer remains the same. At the center of this architecture is elDoc – the orchestration engine that transforms raw data into actionable intelligence and automated execution.

What elDoc Enables

elDoc is not just a retrieval or automation tool – it is a decision and execution layer built on top of enterprise data.

Agentic RAG Orchestration

Coordinates retrieval, reasoning, and action into a single intelligent workflow. Instead of isolated queries, elDoc builds end-to-end execution chains.

Multi-Step Reasoning Across Documents

Analyzes information across multiple sources, connecting context, validating relationships, and building a coherent understanding before producing results.

AI Agent Execution

Moves beyond answers. AI agents can trigger and perform actions such as data extraction, classification, or file organization, validate outputs, and complete tasks, turning insights into outcomes.
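The execute-then-validate pattern behind agent actions can be sketched as a loop over tool calls followed by an output check. In this illustration the plan is hard-coded; a real agent would typically obtain it from an LLM. The tool functions, folder paths, and status values are hypothetical stubs, not elDoc's agent framework.

```python
from typing import Callable


# Hypothetical tools; real ones would call OCR, an LLM, or a document store.
def classify(doc: dict) -> dict:
    doc["type"] = "invoice" if "invoice_no" in doc else "other"
    return doc


def extract(doc: dict) -> dict:
    doc["fields"] = {"invoice_no": doc.get("invoice_no")}
    return doc


def file_document(doc: dict) -> dict:
    doc["folder"] = f"/archive/{doc['type']}s"
    return doc


TOOLS: dict[str, Callable[[dict], dict]] = {
    "classify": classify,
    "extract": extract,
    "file": file_document,
}


def validate(doc: dict) -> bool:
    # Output check before the task is considered complete.
    return doc.get("type") == "invoice" and doc.get("fields", {}).get("invoice_no") is not None


def run_agent(doc: dict, plan: list[str]) -> dict:
    """Run each planned tool in order, then validate the combined result."""
    doc = dict(doc)  # work on a copy so the caller's input is not mutated
    for step in plan:
        doc = TOOLS[step](doc)
    doc["status"] = "done" if validate(doc) else "needs_review"
    return doc


print(run_agent({"invoice_no": "INV-001"}, ["classify", "extract", "file"]))
print(run_agent({"title": "memo"}, ["classify", "extract", "file"]))
```

Results that fail validation are flagged rather than silently accepted, which is where a human reviewer can take over.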

End-to-End Document Processing with Validation

elDoc supports complete end-to-end document processing workflows, from ingestion to final action:

- Intelligent document ingestion and classification

- AI-driven data extraction

- Built-in human-in-the-loop capabilities for review, approval, and exception handling

This ensures that outputs are not only automated but also accurate, auditable, and business-ready.
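A common way to wire in the human-in-the-loop step is to auto-approve high-confidence extractions and route the rest to a reviewer. The sketch assumes each extracted field carries a model confidence score; the threshold, field names, and function are hypothetical and not documented elDoc behavior.

```python
from dataclasses import dataclass

# Hypothetical threshold below which a human must confirm the value.
REVIEW_THRESHOLD = 0.85


@dataclass
class ExtractedField:
    name: str
    value: str
    confidence: float  # model-reported score in [0, 1]


def route_for_review(fields, threshold=REVIEW_THRESHOLD):
    """Split extracted fields into auto-approved ones and ones sent to a reviewer."""
    approved, needs_review = [], []
    for f in fields:
        (approved if f.confidence >= threshold else needs_review).append(f)
    return approved, needs_review


fields = [
    ExtractedField("invoice_no", "INV-001", 0.98),
    ExtractedField("due_date", "2024-07-01", 0.62),
    ExtractedField("total", "1200.00", 0.91),
]
approved, needs_review = route_for_review(fields)
print([f.name for f in approved])      # ['invoice_no', 'total']
print([f.name for f in needs_review])  # ['due_date']
```

Raising the threshold sends more fields to reviewers and trades throughput for accuracy; the right value depends on the document type and the cost of an error.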

AI Data Extraction with Structured Output

Using advanced AI models (LLMs + visual models), elDoc enables:

- High-precision data extraction from structured, unstructured, scanned, and image-based documents

- Built-in AI OCR capabilities that instantly convert documents into machine-readable text as part of the processing pipeline

- Seamless handling of complex layouts, multi-format documents, and low-quality scans

Once processed, data becomes immediately available in structured formats such as JSON or CSV. This allows seamless integration with downstream systems such as ERP and analytics platforms, eliminating manual data entry and significantly accelerating processing cycles.
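To make the JSON and CSV outputs concrete, here is a minimal sketch using only the Python standard library. The record layout and field names are hypothetical; the actual schema depends on how extraction is configured.

```python
import csv
import io
import json

# Hypothetical extraction results for two invoices (field names are illustrative).
records = [
    {"invoice_no": "INV-001", "supplier": "Acme GmbH", "total": 1200.00, "currency": "EUR"},
    {"invoice_no": "INV-002", "supplier": "Globex Ltd", "total": 845.50, "currency": "GBP"},
]

# JSON: convenient for APIs and ERP connectors that accept structured payloads.
as_json = json.dumps(records, indent=2)

# CSV: convenient for bulk imports and analytics tools.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=["invoice_no", "supplier", "total", "currency"])
writer.writeheader()
writer.writerows(records)
as_csv = buffer.getvalue()

print(as_json)
print(as_csv)
```

The same records serialize to either format, so the choice depends on what the receiving system expects.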

Cross-Document Analysis & Validation

Identifies inconsistencies, risks, and gaps across documents such as invoices, contracts, and specifications – something traditional systems cannot do reliably.
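A rule-based sketch shows the kind of cross-document check described above: an invoice is compared with the contract it references. The data classes, field names, and tolerance are hypothetical, and in practice the values would come from AI extraction rather than being typed in. The article does not specify how elDoc's validation is implemented.

```python
from dataclasses import dataclass


@dataclass
class Contract:
    contract_id: str
    supplier: str
    unit_price: float
    max_quantity: int


@dataclass
class Invoice:
    invoice_no: str
    contract_id: str
    supplier: str
    unit_price: float
    quantity: int


def validate_invoice(invoice, contracts, tolerance=0.01):
    """Return human-readable issues found when comparing an invoice to its contract."""
    contract = contracts.get(invoice.contract_id)
    if contract is None:
        return [f"{invoice.invoice_no}: no contract {invoice.contract_id} on file"]
    issues = []
    if invoice.supplier != contract.supplier:
        issues.append(f"{invoice.invoice_no}: supplier {invoice.supplier!r} "
                      f"differs from contract supplier {contract.supplier!r}")
    if abs(invoice.unit_price - contract.unit_price) > tolerance:
        issues.append(f"{invoice.invoice_no}: unit price {invoice.unit_price:.2f} "
                      f"differs from contracted {contract.unit_price:.2f}")
    if invoice.quantity > contract.max_quantity:
        issues.append(f"{invoice.invoice_no}: quantity {invoice.quantity} "
                      f"exceeds contract limit {contract.max_quantity}")
    return issues


contracts = {"C-10": Contract("C-10", "Acme GmbH", 12.50, 100)}
invoice = Invoice("INV-007", "C-10", "Acme GmbH", 13.00, 120)
for issue in validate_invoice(invoice, contracts):
    print(issue)
```

Here the invoice is flagged twice, once for the price mismatch and once for exceeding the contracted quantity.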

Enterprise System Integration

Seamlessly connects with ERP, BPM, and other business systems, enabling AI to operate within real business workflows, not outside them.

Enterprise AI Without Compromise: Flexibility by Design

In today’s enterprise landscape, AI adoption cannot follow a one-size-fits-all approach. Organizations operate under different regulatory pressures, infrastructure strategies, and risk profiles, and their AI platforms must reflect that reality.

elDoc is built precisely for this challenge. As a highly flexible Enterprise AI and Agentic RAG platform, it is designed to support the full spectrum of deployment needs without forcing trade-offs between security, performance, or innovation.

At one end, elDoc can operate in fully isolated, on-premise or air-gapped environments, where data never leaves the organization and every process is tightly controlled. This makes it ideal for sectors where security, compliance, and sovereignty are absolute requirements. At the other end, it enables hybrid and cloud-extended architectures, allowing organizations to leverage the latest advancements in AI, such as external LLM providers, while still maintaining strict enterprise controls, access governance, and data protection mechanisms.

What sets elDoc apart is that this flexibility does not come at the cost of consistency. Across all deployment models, organizations benefit from the same core intelligence layer, security framework, and orchestration capabilities.

The result is a platform that allows enterprises to:

- Align AI deployment with their security and compliance requirements

- Scale from controlled environments to more flexible architectures over time

- Avoid vendor lock-in and retain full control over data and models

- Adopt AI confidently, knowing it operates within their governance framework

elDoc ensures that AI is not just powerful but deployable, controllable, and sustainable at enterprise scale.

Let's get in touch

Explore how elDoc fits your enterprise architecture and schedule a personalized demo today