The Architecture Behind Agentic RAG in elDoc for Enterprise Document Intelligence

Enterprise organizations do not need AI that only chats. They need AI that can understand a task, search across documents and data, connect information from different sources, reason through what it finds, and help complete real work in a secure environment. That is where Agentic RAG becomes important.

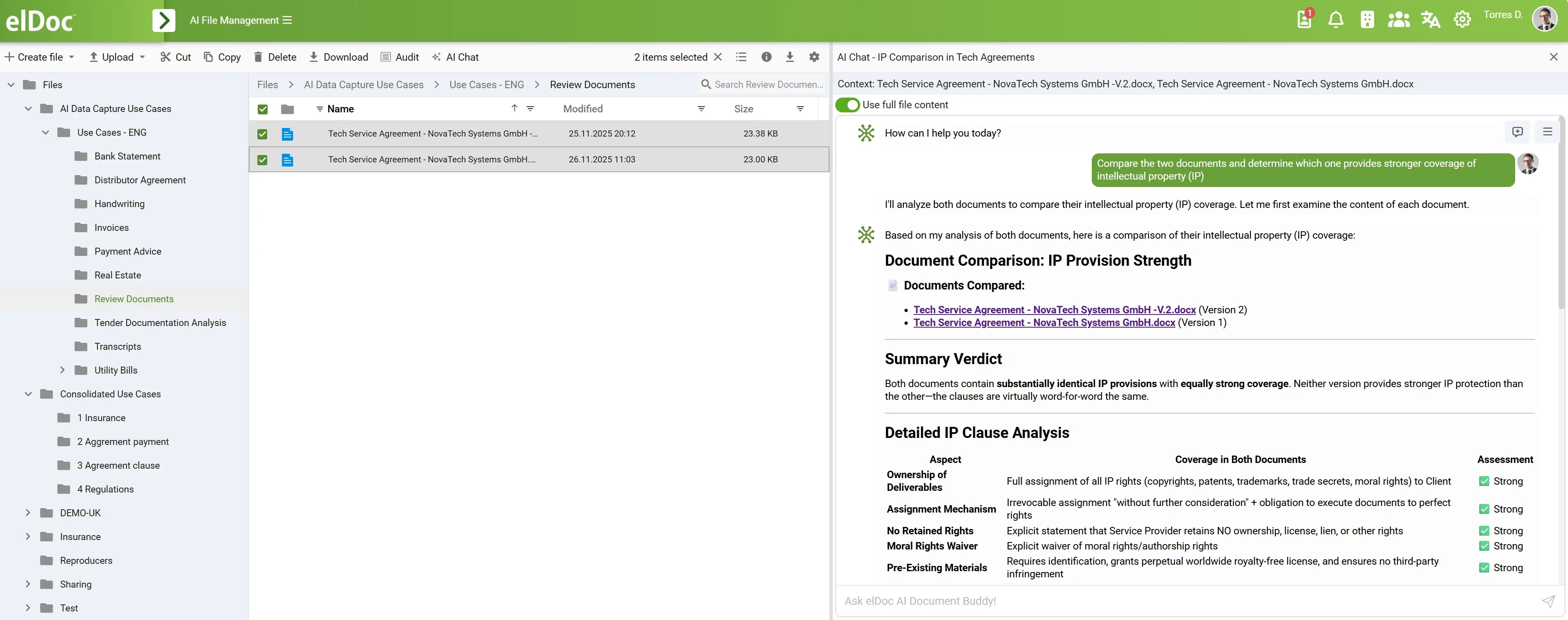

In elDoc, Agentic RAG is not just about retrieving a few relevant text passages and asking a language model to summarize them. It is about orchestrating a full AI pipeline where multiple technologies work together: document storage, text extraction, keyword search, vector search, reasoning models, and AI agents that can plan and execute multi-step tasks. This combination allows elDoc to move beyond passive document access and toward active, task-oriented intelligence for enterprise content.

What Agentic RAG Means in Practice

Traditional document search helps users find files. Basic AI chat helps users ask questions. Basic RAG improves answers by grounding them in retrieved content.

Agentic RAG goes one step further: it enables the system to interpret the user’s request, decide how to solve it, perform multiple retrieval and reasoning steps, validate what it finds, and assemble a useful final result. Instead of relying on a single search-and-answer step, the system can follow a process closer to how a skilled analyst works:

- it understands the request

- it determines what information is needed

- it identifies where that information is likely to be found

- it retrieves relevant evidence and analyzes it

- it checks whether the evidence is sufficient

- it runs additional searches when necessary

- it produces a final answer, summary, report, or action-ready output

That is the core of how Agentic RAG works in elDoc.
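The process described above can be sketched as a small control loop. This is a minimal illustration under stated assumptions, not elDoc's actual implementation: `search`, `is_sufficient`, and `refine_query` are hypothetical callables standing in for the retrieval tools and the model-driven checks, and the toy corpus exists only to make the example runnable.

```python
from typing import Callable, Dict, List


def run_agentic_loop(
    request: str,
    search: Callable[[str], List[str]],
    is_sufficient: Callable[[str, List[str]], bool],
    refine_query: Callable[[str, List[str]], str],
    max_steps: int = 3,
) -> List[str]:
    """Gather evidence in stages until it looks sufficient or the step budget runs out."""
    evidence: List[str] = []
    query = request
    for _ in range(max_steps):
        # Retrieve with the current query and keep only passages not seen before.
        for passage in search(query):
            if passage not in evidence:
                evidence.append(passage)
        # Stop early once the evidence is judged good enough for the request.
        if is_sufficient(request, evidence):
            break
        # Otherwise derive a follow-up query from what has been found so far.
        query = refine_query(request, evidence)
    return evidence


# Hypothetical toy corpus keyed by query string (illustration only).
TOY_CORPUS: Dict[str, List[str]] = {
    "approval limits": ["Policy A: managers approve spend up to a set limit."],
    "delegated authority": ["Policy B: delegation requires written sign-off."],
}

evidence = run_agentic_loop(
    request="approval limits",
    search=lambda q: TOY_CORPUS.get(q, []),
    is_sufficient=lambda req, ev: len(ev) >= 2,
    refine_query=lambda req, ev: "delegated authority",
)
```

In this toy run the first search returns one passage, the sufficiency check fails, and the follow-up query adds a second passage before the loop stops. The `max_steps` budget is a design choice that keeps the loop bounded even when the evidence never becomes sufficient.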

The elDoc AI Pipeline

The power of Agentic RAG in elDoc comes from the way several specialized components work together as one coordinated system. Each component has a different role, and the value comes from how they complement each other.

MongoDB – Document Storage

At the foundation of the pipeline is MongoDB, which serves as the document storage layer. Enterprise document environments are rarely simple. Documents may come in different formats, from different departments, with different structures, metadata fields, lifecycle states, and security classifications. A flexible and scalable storage layer is essential to manage this complexity.

MongoDB supports this need by providing a structure that can store not only the document itself, but also the surrounding context that makes the document useful for AI workflows. This can include metadata such as document type, department, author, date, version, business process reference, access controls, extracted text, chunk structure, indexing information, and links to related records.

This is important because Agentic RAG does not operate only on raw text. It also needs context. For example, when a user asks for policy gaps, compliance inconsistencies, contract obligations, or process risks, the system may need to know not only what the text says, but also which version of a document is current, which department owns it, whether the file is approved, and how it relates to other content.

MongoDB provides the structured and semi-structured data foundation that makes those richer workflows possible.
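To make the idea of "document plus context" concrete, here is a sketch of what one stored record might look like. The field names and values are assumptions chosen for illustration, not elDoc's actual schema; the record is modeled with plain dataclasses and converted to a nested dictionary, which is the general shape a document database stores.

```python
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class Chunk:
    chunk_id: str
    text: str


@dataclass
class DocumentRecord:
    # All field names below are hypothetical, chosen to mirror the metadata
    # mentioned in the text (type, department, version, access, chunks, links).
    doc_id: str
    doc_type: str
    department: str
    author: str
    version: int
    status: str  # e.g. "draft" or "approved"
    allowed_roles: List[str] = field(default_factory=list)
    extracted_text: str = ""
    chunks: List[Chunk] = field(default_factory=list)
    related_ids: List[str] = field(default_factory=list)


record = DocumentRecord(
    doc_id="doc-001",
    doc_type="policy",
    department="Finance",
    author="J. Doe",
    version=3,
    status="approved",
    allowed_roles=["finance_reader"],
    extracted_text="Approval limits apply to all purchase requests.",
    chunks=[Chunk(chunk_id="doc-001-c0", text="Approval limits apply")],
    related_ids=["doc-000"],
)

# asdict() recursively converts nested dataclasses into plain dicts and lists.
as_document = asdict(record)
```

Keeping `version`, `status`, and `allowed_roles` next to the text is what lets later stages ask questions such as "is this the current approved version?" without a second lookup.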

Apache Solr – Full-Text Search

Not every enterprise question should be solved with vector search alone. In many real business scenarios, exact terms, names, codes, references, dates, identifiers, legal phrases, or domain-specific terminology matter a great deal. That is why Apache Solr plays a critical role in the elDoc pipeline.

Solr provides robust full-text search capabilities that make it possible to locate documents and passages using keyword relevance, exact phrase matching, filtering, faceting, metadata constraints, and ranking logic. This is particularly valuable in enterprise contexts where users may search for:

- contract numbers

- invoice IDs

- project codes

- employee names

- policy terms

- legal clauses

- technical terminology

- department-specific language

For example, if a user asks for all references to a specific vendor, policy number, or regulatory requirement, full-text search may be the fastest and most reliable retrieval method. Solr helps the system find these explicit references with precision. In Agentic RAG, this matters because the agent can decide whether a user’s request is best served by precise keyword retrieval, semantic retrieval, or a combination of both. Solr becomes one of the core tools the agent can use to ground its work in highly relevant content.
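As an illustration of precise keyword retrieval, the sketch below builds a request URL for Solr's standard `/select` handler using the common query parameters `q`, `fq`, `fl`, and `rows`. The host, the collection name `documents`, the field names `content`, `department`, `id`, and `title`, and the identifier `PO-2024-0193` are all assumptions made up for this example; the code only constructs the URL and does not contact a server.

```python
from typing import Optional
from urllib.parse import parse_qs, urlencode, urlsplit


def escape_phrase(phrase: str) -> str:
    """Escape backslashes and double quotes so the phrase can sit inside quotes."""
    return phrase.replace("\\", "\\\\").replace('"', '\\"')


def build_select_url(
    base_url: str,
    collection: str,
    phrase: str,
    department: Optional[str] = None,
    rows: int = 10,
) -> str:
    """Build an exact-phrase query, optionally restricted by a filter query."""
    params = [
        ("q", f'content:"{escape_phrase(phrase)}"'),  # exact phrase match
        ("fl", "id,title,score"),                     # fields to return
        ("rows", str(rows)),                          # page size
    ]
    if department is not None:
        # fq narrows the result set without affecting relevance scoring.
        params.append(("fq", f'department:"{escape_phrase(department)}"'))
    return f"{base_url}/solr/{collection}/select?{urlencode(params)}"


url = build_select_url(
    "http://localhost:8983", "documents", "PO-2024-0193", department="Procurement"
)
# Parse the URL back to confirm which parameters would be sent.
query = parse_qs(urlsplit(url).query)
```

Putting the metadata constraint in `fq` rather than in `q` keeps the relevance ranking focused on the phrase itself.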

Qdrant – Vector Search

While full-text search is excellent for exact matches, enterprise users often ask questions in natural language that do not map neatly to the exact wording stored in documents. This is where Qdrant, the vector search layer, becomes essential. Vector search enables elDoc to retrieve information based on semantic similarity, not only exact word overlap. This means the system can find relevant content even when the wording in the document differs from the wording in the user’s question.

For example, a user might ask:

- “What are the main delivery risks in this contract?”

- “Are there any hidden obligations for the supplier?”

- “Show me where responsibilities are unclear.”

- “Which policies describe approval limits?”

The relevant documents may not use these exact phrases. Instead, they may contain related wording such as service commitments, liability clauses, escalation responsibilities, delegated authority, or exception approval requirements. A purely keyword-based search could miss important evidence. Vector search helps recover that context.
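The core idea behind semantic retrieval can be shown with cosine similarity over embedding vectors. The sketch below is a pure-Python stand-in for what a vector database does at scale; it does not use Qdrant's API, and the three-dimensional vectors are hand-made toy values rather than output from a real embedding model.

```python
import math
from typing import Dict, List, Tuple


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(
    query_vec: List[float], chunk_vecs: Dict[str, List[float]], k: int = 2
) -> List[Tuple[str, float]]:
    """Return the k chunk ids most similar to the query, best first."""
    scored = [(cid, cosine_similarity(query_vec, vec)) for cid, vec in chunk_vecs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Toy vectors (assumed): the first two chunks point in roughly the same
# direction as the query, the third does not.
chunk_vecs = {
    "liability_clause": [0.9, 0.1, 0.0],
    "service_commitments": [0.8, 0.3, 0.1],
    "holiday_schedule": [0.0, 0.1, 0.9],
}
query_vec = [1.0, 0.2, 0.0]  # stands in for "main delivery risks in this contract"

results = top_k(query_vec, chunk_vecs, k=2)
```

Here the two contract-related chunks rank above the unrelated one even though no keyword overlap was involved, which is the behavior the paragraph above describes.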

In elDoc, Qdrant supports deeper semantic retrieval across chunks of enterprise documents. This allows the system to surface meaningfully related passages and enrich the evidence set that the agent analyzes.

This is especially useful in complex use cases such as:

- cross-document analysis

- policy and compliance review

- contract intelligence

- operational risk identification

- due diligence

- knowledge discovery

- enterprise Q&A over diverse content

OCR Engines – Text Extraction

Before documents can be searched, analyzed, or reasoned over, their text must first become machine-readable. In enterprise environments, this is not always straightforward. Many important documents are stored as scanned PDFs, image-based files, signed forms, paper archives, or document exports with poor text structure. Without extraction, these files remain invisible to AI.

That is why OCR engines are a vital part of the elDoc AI pipeline.

OCR, or Optical Character Recognition, allows elDoc to extract text from scanned or image-based documents so that the content can be indexed, chunked, searched, and used in downstream AI workflows. This step is far more important than it may appear. The quality of text extraction directly affects the quality of retrieval, reasoning, and final outputs. If text is missing, broken, misread, or poorly segmented, downstream AI will have weaker evidence to work with.
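Once text has been extracted, it is typically split into chunks before indexing. The following sketch shows one simple approach, fixed-size word windows with overlap; the window sizes are arbitrary assumptions, and the source does not say which chunking strategy elDoc uses.

```python
from typing import List


def chunk_text(text: str, max_words: int = 50, overlap: int = 10) -> List[str]:
    """Split text into word windows of max_words, each sharing `overlap` words with the previous one."""
    if max_words <= 0 or not 0 <= overlap < max_words:
        raise ValueError("require max_words > 0 and 0 <= overlap < max_words")
    # split() also collapses the irregular whitespace that OCR output often contains.
    words = text.split()
    step = max_words - overlap
    chunks: List[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        # Stop once this window has reached the end of the text.
        if start + max_words >= len(words):
            break
    return chunks


sample = " ".join(f"w{i}" for i in range(12))  # "w0 w1 ... w11"
chunks = chunk_text(sample, max_words=5, overlap=2)
```

The overlap means a sentence that straddles a boundary still appears whole in at least one chunk, which reduces the chance that poorly segmented text weakens retrieval.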

In practice, OCR enables elDoc to expand AI coverage across real enterprise archives instead of limiting intelligence to only digitally generated files. It helps bring historical records, paper-based workflows, signed documents, legacy scans, and operational attachments into the same searchable and analyzable knowledge layer.

That means more complete retrieval, better context, and stronger business value.

LLMs – Reasoning and Generation

Once relevant information has been found, the next step is not merely to repeat it. The system must interpret, compare, synthesize, explain, and generate a useful response. This is the role of the LLM layer. Large language models in elDoc are used for reasoning and generation. They help transform retrieved evidence into outputs that are understandable and actionable for users.

Depending on the task, this may include:

- answering a question based on retrieved documents

- summarizing findings across multiple sources

- identifying inconsistencies or missing information

- comparing clauses, policies, or versions

- extracting obligations, risks, dates, or responsibilities

- drafting a report or structured output

- explaining results in plain language

The key point is that the model does not operate in isolation. In Agentic RAG, the LLM is grounded in the retrieved content and becomes part of a broader workflow controlled by the agent.

This matters because enterprise users do not need generic text generation. They need trustworthy outputs based on authorized and relevant content. The LLM provides interpretation and language capability, while the retrieval and agent layers ensure the reasoning process is anchored in evidence.
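Grounding usually comes down to how the prompt is assembled. The sketch below builds a prompt that restricts the model to numbered, retrieved sources and asks for citations. The wording of the instructions and the `(source_id, text)` passage format are assumptions for illustration; no specific model or vendor API is implied.

```python
from typing import List, Tuple


def build_grounded_prompt(question: str, passages: List[Tuple[str, str]]) -> str:
    """Assemble a prompt from (source_id, text) pairs so answers can cite sources."""
    lines = [
        "Answer using only the numbered sources below.",
        "If the sources do not contain the answer, say so.",
        "",
        "Sources:",
    ]
    for number, (source_id, text) in enumerate(passages, start=1):
        lines.append(f"[{number}] ({source_id}) {text}")
    lines += ["", f"Question: {question}", "Answer (cite sources as [n]):"]
    return "\n".join(lines)


prompt = build_grounded_prompt(
    "Who approves purchases above the standard limit?",
    [
        ("policy-7", "Purchases above the standard limit require director approval."),
        ("policy-9", "Directors may delegate approval in writing."),
    ],
)
```

Numbering the sources gives the generated answer something verifiable to point back to, which supports the traceability that enterprise users expect.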

AI Agents – Planning and Task Execution

The defining layer in Agentic RAG is the AI agent. An agent does not simply answer from a single prompt. It works more like an intelligent coordinator. It interprets the user’s goal, determines what steps are needed, chooses the right tools, evaluates intermediate results, and decides whether additional retrieval or analysis is necessary before producing the final response.

This is what transforms a standard RAG system into an Agentic RAG system.

In elDoc, the agent can orchestrate multiple stages of work such as:

- understanding the user’s intent

- selecting search strategies

- combining keyword and semantic retrieval

- validating whether the retrieved evidence is sufficient

- triggering follow-up searches if gaps remain

- aggregating findings across documents

- structuring the output in a way that matches the task

Instead of a one-shot interaction, the system becomes a guided workflow engine for knowledge tasks.

This is especially powerful in enterprise use cases where requests are rarely simple. A user might ask to identify process risks, compare contract versions, find conflicting policies, analyze a vendor file, detect missing approvals, or summarize evidence for a management report. These tasks require planning, iteration, and context-aware reasoning. The agent is what makes that possible.
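One common way to combine keyword and semantic retrieval is reciprocal rank fusion (RRF), where each document scores the sum of 1 / (k + rank) over the result lists it appears in. The sketch below is offered as an example of the technique; the source does not state which fusion method elDoc uses, and the document ids are made up.

```python
from collections import defaultdict
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Merge several ranked lists of ids into one list, best first."""
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Higher positions contribute more; k dampens the effect of top ranks.
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by descending score, breaking ties by id for a stable order.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


keyword_hits = ["doc_a", "doc_b", "doc_c"]   # e.g. from full-text search
semantic_hits = ["doc_b", "doc_d", "doc_a"]  # e.g. from vector search

fused = reciprocal_rank_fusion([keyword_hits, semantic_hits])
```

RRF needs only rank positions, not raw scores, so it sidesteps the problem that keyword relevance scores and vector similarities are on different scales.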

Why Multi-Step Reasoning Makes Agentic RAG Powerful

The real strength of Agentic RAG lies in multi-step reasoning.

Many enterprise tasks cannot be solved reliably through a single prompt and a single retrieval step. Important information may be scattered across multiple documents. Key risks may be implied rather than explicitly stated. Critical evidence may only become relevant after initial findings point toward another source.

Multi-step reasoning allows elDoc to approach the problem dynamically. Instead of assuming the first retrieved passages are sufficient, the system can think through the task, gather evidence in stages, refine its search, and build a more complete answer. This makes it much better suited for complex knowledge work such as:

- compliance analysis

- contract review

- audit support

- policy alignment

- due diligence

- process analysis

- enterprise knowledge discovery

- document-driven investigation

In other words, Agentic RAG is powerful because it allows AI to behave less like a static chatbot and more like an intelligent assistant that can work through a problem.

Agentic RAG must deliver both efficiency and security – in elDoc, this is built into the architecture

While multi-step reasoning is what makes Agentic RAG powerful, it also introduces a critical requirement: security at every step of the workflow.

In elDoc, Agentic RAG is designed not only to understand, retrieve, and reason across enterprise documents, but also to do so strictly within a controlled and secure environment.

Every time a user submits a request, the system does not simply begin searching. Instead, elDoc first verifies who the user is and what they are allowed to access. This ensures that all subsequent actions (retrieval, analysis, and response generation) are fully aligned with enterprise access policies.

At the core of this approach is Role-Based Access Control (RBAC), which defines what data each user can see and interact with. The AI agents in elDoc inherit these permissions and operate strictly within them. They cannot retrieve, analyze, or generate outputs based on information the user is not authorized to access.

This means that:

- every query is permission-aware

- every retrieval is access-controlled

- every response is grounded only in authorized content
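The three properties above can be illustrated with a small permission filter applied to retrieved passages before they reach the model. This is a simplified sketch: the `Passage` type and role names are hypothetical, and a production system would more likely push the role constraint into the retrieval query itself rather than filter afterwards.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Passage:
    doc_id: str
    text: str
    allowed_roles: FrozenSet[str]  # roles permitted to read the source document


def filter_by_roles(passages: List[Passage], user_roles: FrozenSet[str]) -> List[Passage]:
    """Keep only passages whose allowed roles intersect the user's roles."""
    return [p for p in passages if p.allowed_roles & user_roles]


retrieved = [
    Passage("hr-12", "Salary bands for 2024.", frozenset({"hr_admin"})),
    Passage("fin-3", "Travel expense policy.", frozenset({"employee", "finance"})),
]

# A user holding only the "employee" role sees the finance policy but not HR data.
visible = filter_by_roles(retrieved, frozenset({"employee"}))
```

Because the filter runs before prompt assembly, content the user cannot access never becomes part of the evidence the model reasons over.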

However, security in elDoc goes far beyond RBAC.

The platform incorporates a multi-layer enterprise security model, ensuring protection across identity, data, infrastructure, and operations:

- Identity and Access Security: Multi-Factor Authentication (MFA), One-Time Passwords (OTP), and integration with enterprise identity systems ensure that only verified users can access the platform

- Granular Permissions and Controls: Fine-grained access policies down to document and data level, with strict enforcement during every AI interaction

- Monitoring, Logging, and Auditability: Full traceability of user actions, enabling transparency, compliance, and governance

- Secure Deployment Options: Support for on-premise, private cloud, or hybrid environments, ensuring full control over where data is stored and processed

- Isolated Environments: The ability to run elDoc in fully isolated infrastructures, critical for organizations handling sensitive or confidential data

- High Availability and Disaster Recovery: Enterprise-grade reliability with resilient architecture, ensuring continuity of AI-driven operations

This layered approach ensures that as AI becomes more capable through multi-step reasoning, it does not introduce additional risk.

In elDoc, AI does not bypass security; it enforces it.

From the moment a question is asked to the final generated output, every step of the Agentic RAG workflow is continuously validated against enterprise policies. This guarantees that organizations can leverage advanced AI across their documents and data while maintaining full control, compliance, and protection.
